Microsoft engineers have introduced an AI (artificial intelligence) model for text-to-speech called VALL-E. It is able to imitate a human voice, relying only on a three-second sound sample.

The developers claim that VALL-E can synthesize audio in which the “learned” voice says arbitrary text, while preserving even the speaker’s emotional tone.

Media outlets have previously reported that attackers use voice-changing software to deceive their victims.

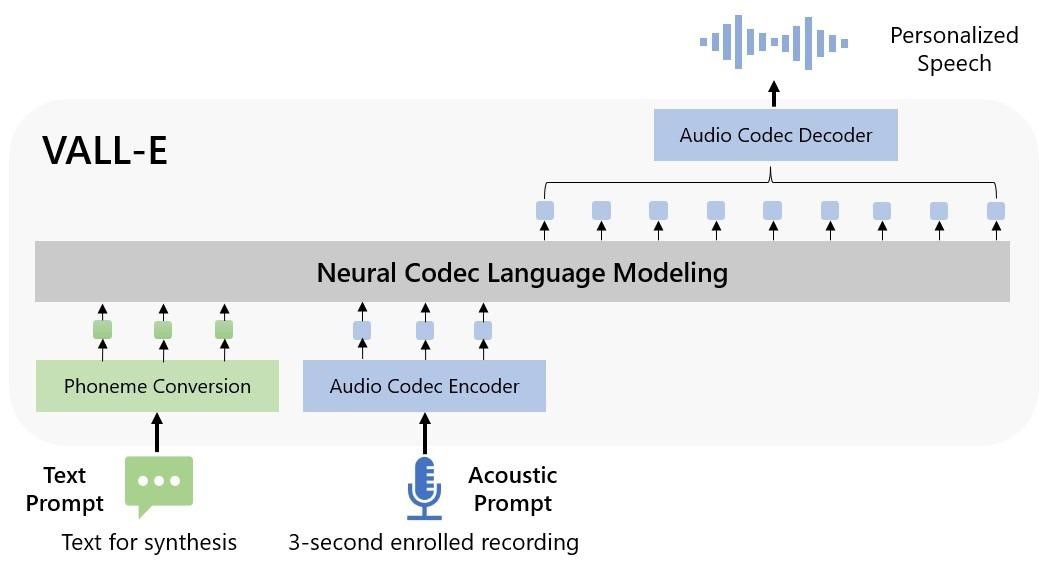

The creators call VALL-E a “neural codec language model” and believe it can be used for high-quality text-to-speech applications and for speech editing, where a recording is changed by editing its text transcript (that is, a person will “say” something they didn’t originally say). It could also be used to create audio content in combination with other generative AI models, such as GPT-3 (the model behind the sensational ChatGPT).

VALL-E builds on EnCodec, the neural audio codec that Meta announced in October 2022. Unlike other text-to-speech methods, VALL-E generates discrete audio codec codes from text and an acoustic prompt (the short voice sample).

Essentially, VALL-E analyzes what a person sounds like, breaks that information down into discrete components (called “tokens”) with EnCodec, and uses its training data to predict how that voice would sound if it spoke phrases other than those in the three-second sample.
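To make the idea of “tokens” more concrete, here is a minimal toy sketch of turning a waveform into discrete codes by matching short frames against a fixed codebook. It is only an illustration of the general principle and not EnCodec or VALL-E code: the function names, the four-entry `CODEBOOK`, and the sine wave standing in for speech are all assumptions made for this example, whereas a real neural codec learns its codebooks from data.

```python
import math


def frame_signal(samples, frame_size):
    """Split samples into non-overlapping frames, dropping any leftover tail."""
    return [samples[i:i + frame_size]
            for i in range(0, len(samples) - frame_size + 1, frame_size)]


def nearest_code(frame, codebook):
    """Return the index of the codebook vector closest to the frame."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(range(len(codebook)),
               key=lambda k: squared_distance(frame, codebook[k]))


def tokenize(samples, codebook):
    """Map a waveform to a list of discrete token ids, one per frame."""
    frame_size = len(codebook[0])
    return [nearest_code(f, codebook) for f in frame_signal(samples, frame_size)]


def detokenize(tokens, codebook):
    """Rebuild a rough approximation of the waveform from token ids."""
    out = []
    for t in tokens:
        out.extend(codebook[t])
    return out


# Hypothetical codebook: 4 entries, each describing a 2-sample frame.
CODEBOOK = [[-0.5, -0.5], [-0.5, 0.5], [0.5, -0.5], [0.5, 0.5]]

# A short synthetic sine wave standing in for recorded speech.
signal = [math.sin(2 * math.pi * n / 8) for n in range(16)]
tokens = tokenize(signal, CODEBOOK)    # one small integer per frame
approx = detokenize(tokens, CODEBOOK)  # coarse reconstruction of the signal
```

Once audio is represented as a sequence of small integers like `tokens`, a language model can be trained to predict such sequences in much the same way as it predicts text, which is the intuition behind the “neural codec language model” name.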

Microsoft trained VALL-E’s speech synthesis on the LibriLight audio library, which contains 60,000 hours of English speech from over 7,000 speakers (mostly taken from public domain audiobooks on LibriVox). For VALL-E to perform well, the voice in the three-second sample must be similar to a voice in this training data.

Microsoft has published dozens of examples of VALL-E’s output on a dedicated demo website.

Interestingly, in addition to preserving the speaker’s timbre and emotional tone, VALL-E can also imitate the “acoustic surroundings” of the audio sample. For example, if the sample is taken from a phone call, the synthesized speech can also sound like a call recording, with all the corresponding distortions and nuances.

Since VALL-E can clearly be used for a wide variety of abuse and fraud, Microsoft has not yet released its source code. The company notes that in the future it may be possible to build a model for detecting audio content generated with VALL-E.